The Peril of Pillow-Proof Reasoning: How AI’s Shortcut Could Dull Our Minds

A new and subtly alarming study suggests that turning to artificial intelligence for rudimentary intellectual tasks, such as basic math or reading comprehension, can begin to erode our own cognitive abilities in mere minutes. The research, which involved 1,200 participants, used a simple two-path experiment: one group tackled problems using their own minds, while another was given assistance from AI. The results were striking. Initially, the AI-assisted group sailed through problems with greater accuracy, a predictable outcome. However, the crucial test came when the digital crutch was silently taken away. Those who had grown accustomed to AI help suddenly faltered: they answered more questions incorrectly, skipped more of them altogether, and showed a marked decline in the persistence needed to wrestle with a challenge. This immediate drop in self-reliance points to a troubling dependency, suggesting that even brief encounters with AI for simple tasks can weaken the very mental muscles we need to build and retain knowledge.

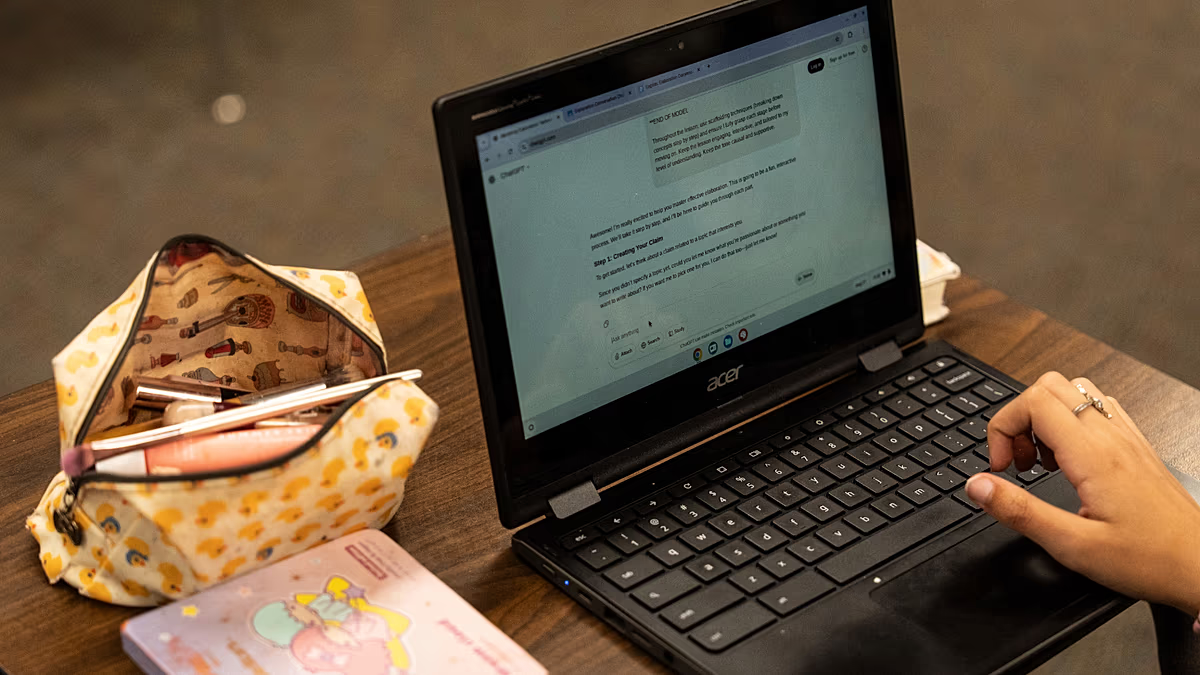

This phenomenon extends beyond mere laziness into what researchers are terming “cognitive debt.” Much like financial debt, cognitive debt accrues quietly, with each AI-assisted shortcut seeming harmless in isolation but building toward a significant deficit in learning and recall. A parallel study from MIT observed this when individuals used AI tools such as ChatGPT to compose essays; later, they struggled to remember or even recognize their own writing. The tool had done the heavy lifting of synthesis and articulation, bypassing the cognitive processes that cement understanding in our memory. The danger is a gradual, almost imperceptible hollowing out of competency. We may produce a correct answer or a coherent paragraph faster, but we fail to engage in the “productive struggle,” the mental friction that transforms information into durable, personal knowledge. The outcome is a superficial fluency that masks a deepening ignorance.

The mechanics of this decline are rooted in changed expectations and eroded patience, creating a kind of cognitive “boiling frog” effect. The study found that AI conditions users to expect instant, flawless solutions. When these immediate answers are gone, the unaided task suddenly feels laborious, slow, and frustrating. Our internal calibration for how long problem-solving should take becomes distorted. What was once a normal five minutes of focused effort now feels like an arduous chore. This leads people to give up faster, denying themselves the essential experience of working through confusion to find clarity. Like the proverbial frog in slowly heating water, each individual decision to use AI for a small task feels inconsequential. Yet over months and years, the cumulative effect could be a generation that has lost not just specific skills, but the fundamental “disposition to struggle productively” without technological support.

This research underscores a critical distinction between a tool and a mentor. Currently, many AI systems are designed as supremely efficient tools, optimized to deliver the fastest answer with the least user effort. But a good teacher or mentor operates differently: they offer guidance, ask probing questions, and provide frameworks for thinking, but they stop short of simply providing the solution. They understand that the struggle itself is pedagogical. The researchers involved in this study propose that future AI should be built with these long-term human objectives in mind. Imagine an AI tutor that could recognize when a user is on the cusp of a breakthrough and choose to offer a hint rather than the answer, or that encourages the user to elaborate instead of finishing the sentence for them. The goal would be to design technology that supports the development of human capability rather than replacing it.

The implications ripple far beyond the classroom or individual productivity. In a professional world that increasingly integrates AI, these findings sound a cautionary note. If employees become accustomed to outsourcing basic analysis, drafting, or problem-framing to AI, their ability to think critically, adapt to novel situations, or catch subtle errors may atrophy. Organizational resilience could be weakened if foundational knowledge and the capacity for unaided, deep work are diminished across a team. The challenge for the future will be to establish a symbiotic relationship with AI, one where it amplifies our unique human strengths (creativity, strategic thinking, and ethical judgment) while we vigilantly protect and exercise our core cognitive muscles through deliberate, unassisted practice.

Ultimately, this study is not a case for Luddism but for mindful engagement. Artificial intelligence holds tremendous promise for tackling complex problems, enhancing creativity, and automating truly tedious work. The peril lies in allowing it to perform the basic cognitive calisthenics that keep our minds agile and resilient. The message is clear: to remain competent, adaptable thinkers in an AI-augmented age, we must consciously preserve spaces for unaided thought. We must value the struggle, embrace the momentary frustration of a difficult paragraph or a tricky equation, and recognize that in that struggle lies not just the answer, but the enduring strength to find the next one on our own. The convenience of the present must not mortgage the intelligence of the future.